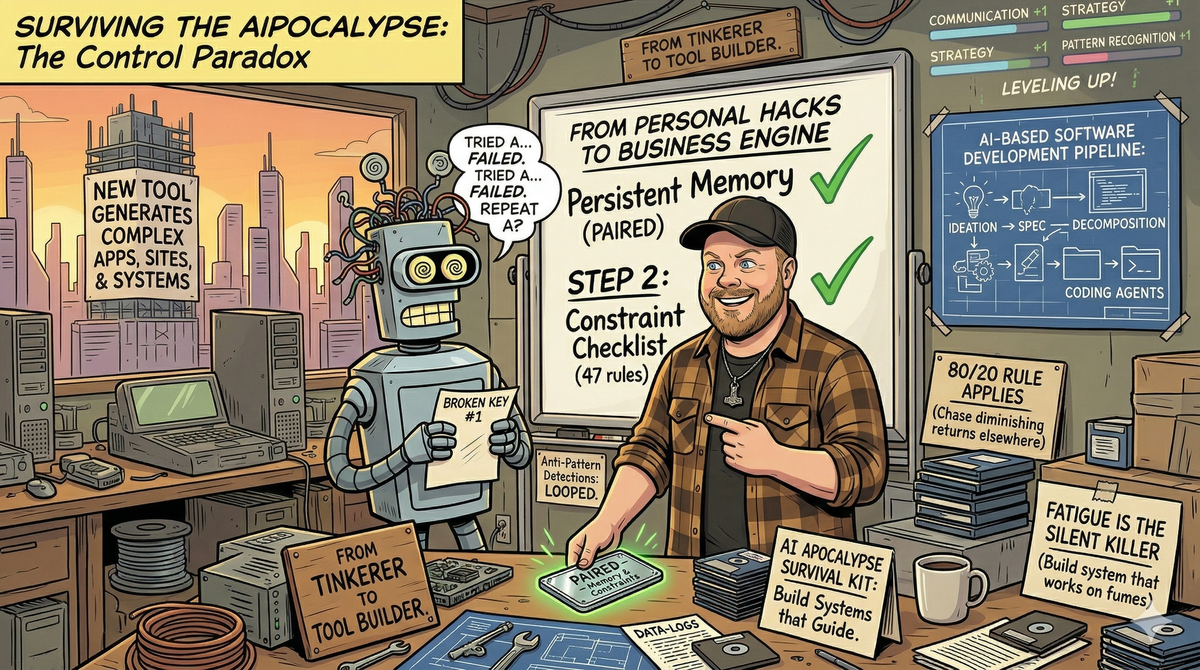

From AI Tinkerer to Tool Builder: Three Years of Surviving the AIPocalypse

Three years ago, I made a decision that would reshape everything: if I was going to survive the coming AIPocalypse, I would need to control the AI, or the AI would control me.

It started at Seattle's AI Tinkerers meetups—rooms full of engineers, entrepreneurs, and curious minds all asking the same question: "What happens when this gets really good?" While others debated timelines and existential risks, I was already building. The answer wasn't to fear the wave; it was to learn to surf it.

The Loop Problem

Anyone who's worked with coding LLMs knows the frustration. You give it a task, it tries solution A. Fails. Tries solution B. Fails. Goes back to solution A. Fails again. It's like watching someone try to unlock a door with the same broken key, over and over, convinced that this time it will work.

That's when I built PAIRED—my first serious attempt at overriding the default agent behavior inside development environments. It wasn't pretty, but it taught me something crucial: raw prompting isn't enough. You need guardrails. You need memory. You need to track anti-patterns.

If we've tried something multiple times and failed, we need to look forward, not backward.

From Personal Tools to Business Engine

What began as personal productivity hacks evolved into something bigger. Those ChatGPT and Claude.ai projects that solved my daily annoyances? They revealed patterns that could scale beyond just me. I wasn't just building tools—I was building a system for building tools.

The breakthrough came when I developed an AI-based software development pipeline: ideation flows into spec planning, which feeds into decomposition, which passes clean instructions to coding agents. Each layer adds structure, and each checkpoint prevents the infinite loops that plague direct prompting.
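To make the shape of that idea concrete, here is a minimal Python sketch of a staged pipeline with a checkpoint after every stage. It is not the actual pipeline described above: the `Stage` class, the `run_pipeline` function, the `max_attempts` cap, and the lambda stand-ins are all hypothetical, and in a real setup each `run` would call a model instead of formatting a string.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    run: Callable[[str], str]     # turns the previous stage's output into this stage's output
    check: Callable[[str], bool]  # checkpoint: is the output good enough to hand on?


class CheckpointFailed(Exception):
    """Raised when a stage cannot pass its checkpoint within the attempt cap."""


def run_pipeline(stages: list[Stage], seed: str, max_attempts: int = 2) -> str:
    artifact = seed
    for stage in stages:
        for _ in range(max_attempts):
            candidate = stage.run(artifact)
            if stage.check(candidate):
                artifact = candidate
                break
        else:
            # The cap is what stops an endless retry loop: fail loudly instead.
            raise CheckpointFailed(f"{stage.name} failed {max_attempts} attempts")
    return artifact


# Stand-in stages (assumptions for illustration only).
stages = [
    Stage("ideation", lambda s: f"idea: {s}", lambda out: out.startswith("idea:")),
    Stage("spec", lambda s: f"spec for [{s}]", lambda out: "spec" in out),
    Stage("decomposition", lambda s: f"tasks from [{s}]", lambda out: out.startswith("tasks")),
]
result = run_pipeline(stages, "todo app")
```

The design choice worth noticing is that a stage never sees unchecked output from the stage before it, and a stage that keeps failing stops the run rather than cycling.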

Now I have tooling that generates complex apps, sites, and entire systems. Sure, it still hiccups when I get sloppy with prompting after a long day. But the difference is night and day.

Hard-Won Lessons from the Trenches

Three years of building AI tools taught me things no tutorial covers. Here's what really matters:

1. Memory Beats Intelligence Every Time

The smartest AI in the world is useless if it can't remember what didn't work. I spent months building increasingly sophisticated prompts before realizing the real problem: context loss. Now every tool I build starts with persistent memory. Track attempts, failures, and especially the subtle variations that matter. Your AI doesn't need to be smarter—it needs to remember being dumber.
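A persistent attempt log can be very small. The sketch below is one possible shape, assuming a JSON Lines file on disk; the `AttemptLog` class, its method names, and the entry fields are illustrative choices, not a description of any particular tool.

```python
import json
import time
from pathlib import Path


class AttemptLog:
    """Append-only record of what was tried for each task, stored as JSON Lines."""

    def __init__(self, path):
        self.path = Path(path)

    def record(self, task, approach, succeeded, note=""):
        entry = {
            "task": task,
            "approach": approach,
            "succeeded": succeeded,
            "note": note,
            "ts": time.time(),
        }
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def history(self, task):
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            entries = [json.loads(line) for line in f if line.strip()]
        return [e for e in entries if e["task"] == task]

    def failures_summary(self, task):
        """Text block of failed approaches, suitable for pasting into the next prompt."""
        failed = [e for e in self.history(task) if not e["succeeded"]]
        return "\n".join(f"- {e['approach']}: {e['note']}" for e in failed)
```

Feeding `failures_summary(task)` back into the next prompt is the part that matters: the model starts each attempt already knowing what did not work.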

2. Constraints Create Freedom

Counter-intuitive but true: the more constraints I added, the more creative the outputs became. Unlimited possibility is paralyzing for humans and AIs alike. Give an AI 47 formatting rules and watch it excel within those bounds. The magic happens in the margins, not in the void.

3. Anti-Pattern Detection Is Your Superpower

Most people focus on positive patterns—what works. I learned to obsess over negative patterns—what consistently fails. When your AI tries the same broken approach three times, that's not persistence, that's stupidity. Build systems that recognize "we've been here before" and force exploration of untried paths.
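Here is a rough sketch of what "we've been here before" detection could look like. The word-set `fingerprint` is a deliberately crude assumption (a real system might compare diffs or embeddings), and `AntiPatternTracker` with its method names is hypothetical. The threshold of three comes from the rule of thumb above.

```python
import re
from collections import Counter


def fingerprint(approach: str) -> str:
    """Normalize an approach description so trivial rewordings share one key."""
    words = re.findall(r"[a-z0-9]+", approach.lower())
    return " ".join(sorted(set(words)))


class AntiPatternTracker:
    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = Counter()

    def record_failure(self, approach: str) -> None:
        self.failures[fingerprint(approach)] += 1

    def is_blocked(self, approach: str) -> bool:
        return self.failures[fingerprint(approach)] >= self.max_failures

    def pick_next(self, candidates):
        """Return the first candidate that is not blocked, or None if all are."""
        for candidate in candidates:
            if not self.is_blocked(candidate):
                return candidate
        return None
```

When `pick_next` returns `None`, every known path is exhausted, which is a reasonable signal to stop and ask a human rather than loop again.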

4. The 80/20 Rule Applies to AI Prompting

20% of your prompt engineering effort will solve 80% of your problems. The remaining 80% of effort chases diminishing returns. Learn to recognize when you're polishing versus when you're problem-solving. Stop perfecting prompts that are already good enough.

5. Fatigue Is the Silent Killer

Your prompts degrade as you get tired. Period. When I'm sharp, I write precise, structured instructions. When I'm exhausted, I write vague requests and wonder why the output is garbage. Build systems that compensate for human fatigue—templates, checklists, and fallback patterns that work even when you're running on fumes.
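One way to build that kind of safety net is a template that refuses to produce a prompt until the important fields are filled in. The field names below (`goal`, `constraints`, `done_when`, `known_failures`) are assumptions chosen for illustration; the point is only that the checklist runs even when I don't feel like running it.

```python
from string import Template

TASK_TEMPLATE = Template(
    "Goal: $goal\n"
    "Constraints: $constraints\n"
    "Done when: $done_when\n"
    "Do not retry: $known_failures"
)
REQUIRED = ("goal", "constraints", "done_when", "known_failures")


def build_prompt(**fields) -> str:
    # Treat missing and blank fields the same way: both mean the thinking was skipped.
    missing = [k for k in REQUIRED if not str(fields.get(k, "")).strip()]
    if missing:
        raise ValueError(f"fill in before sending: {', '.join(missing)}")
    return TASK_TEMPLATE.substitute({k: fields[k] for k in REQUIRED})
```

A vague late-night request like `build_prompt(goal="fix the bug")` fails immediately with a list of what is missing, instead of producing garbage output twenty minutes later.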

6. Composition Beats Complexity

Instead of building one mega-prompt that does everything, build small components that chain together. Each component has one job and does it well. This makes debugging trivial, optimization targeted, and scaling natural. Think Unix pipes, not Windows registry.

7. The Human-AI Handoff Is Everything

The transition points between human judgment and AI execution determine your success rate. Make these handoffs explicit. When does human oversight matter? When can the AI run autonomously? When do you need human creativity versus AI consistency? Map these boundaries clearly, or you'll waste time on both sides.
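Making the boundary explicit can be as simple as writing it down as data. This sketch assumes a hypothetical policy table; the action names and the `route` function are invented for illustration, and the only real design decision is the default.

```python
from enum import Enum


class Handoff(Enum):
    AUTO = "auto"                  # the AI may proceed without asking
    HUMAN_REVIEW = "human_review"  # a person must approve first


# Hypothetical policy: which kinds of action fall on which side of the line.
POLICY = {
    "format_code": Handoff.AUTO,
    "write_tests": Handoff.AUTO,
    "change_schema": Handoff.HUMAN_REVIEW,
    "deploy": Handoff.HUMAN_REVIEW,
}


def route(action: str) -> Handoff:
    # Anything not explicitly mapped goes to a human by default.
    return POLICY.get(action, Handoff.HUMAN_REVIEW)
```

Defaulting unknown actions to human review means a gap in the map costs a little time rather than an unsupervised mistake.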

8. Version Everything, Trust Nothing

AI outputs are non-deterministic. Something that worked yesterday might fail today for reasons you'll never understand. Version your prompts, your data, your outputs, and especially your failures. The day you need to roll back to a working state, you'll thank yourself for the paranoia.
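A lightweight way to do this without extra tooling is to key everything on a hash of the prompt text. The sketch below assumes a plain directory as the store; `save_version`, `load_runs`, and the file naming scheme are hypothetical, and a Git repository would serve the same purpose.

```python
import hashlib
import json
from pathlib import Path


def save_version(store: Path, prompt: str, output: str, ok: bool) -> str:
    """Store the prompt under its content hash and append this run's outcome."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]
    store.mkdir(parents=True, exist_ok=True)
    (store / f"{digest}.prompt.txt").write_text(prompt, encoding="utf-8")
    with (store / f"{digest}.runs.jsonl").open("a", encoding="utf-8") as f:
        f.write(json.dumps({"output": output, "ok": ok}) + "\n")
    return digest


def load_runs(store: Path, digest: str) -> list[dict]:
    """Return every recorded run (successes and failures) for one prompt version."""
    with (store / f"{digest}.runs.jsonl").open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Because the same prompt text always maps to the same digest, repeated runs pile up under one version, and the failures are kept right next to the successes.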

9. Start Boring, Get Weird Later

Every tool I built that lasted started with the most mundane use case imaginable. The flashy, creative applications came later. Build something that solves a boring problem really well, then let it evolve toward interesting problems. Boring problems have clear success criteria; interesting problems have moving goalposts.

10. The Best Tool Is the One You Actually Use

I built dozens of tools that were technically impressive and practically ignored. The ones that stuck weren't the cleverest; they were the ones that integrated seamlessly into my existing workflow. If a tool requires me to change how I work, it's already half-dead. Build tools that enhance existing habits rather than replace them.

The Video Game Metaphor

Building these tools taught me something unexpected: working with AI is like leveling up in an RPG. You don't just improve one skill—you develop multiple capabilities in parallel.

Your prompt engineering improves your "communication" stat. Building memory systems levels up your "strategy" skill. Learning to spot anti-patterns boosts your "pattern recognition" ability. Each improvement unlocks new possibilities and makes you more effective across every domain.

This isn't just about coding. This is about building the cognitive scaffolding that will matter when AGI arrives.

The Control Paradox

The irony isn't lost on me: to control AI, I had to give up control. Instead of trying to micromanage every output, I built systems that guide behavior. Instead of fighting the randomness, I created structures that channel it productively.

The tools don't replace human judgment—they amplify it. They don't eliminate mistakes—they make them faster to catch and cheaper to fix. They don't make me obsolete—they make me more capable than I've ever been.

That's the real insight from three years of building: the AIPocalypse isn't about humans versus machines. It's about augmented humans versus unaugmented humans. The gap between those two groups is about to become an ocean.

What's Next

We're building toward something unprecedented. Every tool I create, every anti-pattern I document, every feedback loop I optimize—it's all preparation for the moment when AI capabilities make another quantum leap.

The question isn't whether that moment is coming. The question is whether you'll be ready to surf the wave, or whether you'll be swept away by it.

Time to level up.